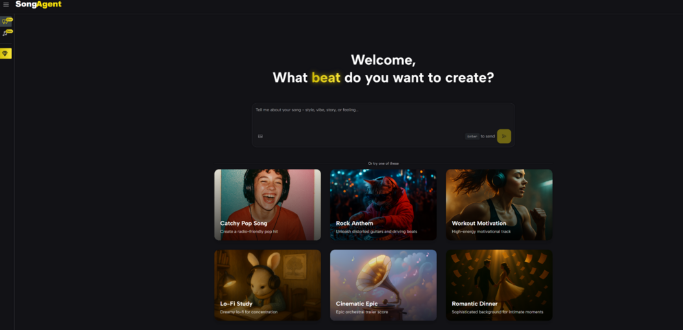

The prevailing frustration with generative media is the lack of steerability. For years, the standard interaction model has been a “black box”: a user inputs a text prompt, the machine rolls a probabilistic die, and the output is a finished audio file that is either perfect or useless. There has been no middle ground for adjustment. The emergence of the AI Song Agent marks a divergence from this “slot machine” methodology. By introducing an intermediate architectural layer between the prompt and the audio rendering, this system addresses the primary failure point of earlier models: the inability to plan a composition before executing it.

The Engineering Behind Intentional Composition

Music is not merely a sequence of harmonious frequencies; it is a structured language with rules regarding tension, release, and temporal organization. Standard large language models (LLMs) adapted for audio often struggle with long-term coherence, “forgetting” the motif of the intro by the time they reach the chorus.

Deconstructing The Blueprint Architecture

The agentic approach addresses this by separating the composition phase from the production phase. When a user engages with the system, the AI does not immediately generate sound. Instead, it acts as a composer sketching a score, explicitly defining the constraints: key signature, time signature, instrumentation, and section length. This “Musical Blueprint” serves as a contract between the user and the machine, ensuring that the subsequent audio generation is bound by explicit theoretical parameters rather than left to chance.
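
To make the idea of a blueprint-as-contract concrete, here is a minimal Python sketch of what such a plan might look like as data. The schema (`MusicalBlueprint`, `Section`, and every field name) is hypothetical, not the product's actual format, and the tempo field and validation ranges are assumptions added for illustration. The point is only that the constraints are explicit and are checked before any audio is produced.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical schema: names and fields are illustrative, not the product's real format.
NOTE_NAMES = ("C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B")
VALID_KEYS = {f"{root} {mode}" for root in NOTE_NAMES for mode in ("major", "minor")}


@dataclass(frozen=True)
class Section:
    name: str  # e.g. "intro", "verse", "chorus"
    bars: int  # section length in bars


@dataclass(frozen=True)
class MusicalBlueprint:
    key: str                         # e.g. "A minor"
    time_signature: tuple[int, int]  # (beats per bar, beat unit), e.g. (4, 4)
    tempo_bpm: int                   # assumption: tempo is fixed in the plan
    instruments: tuple[str, ...]
    sections: tuple[Section, ...]

    def __post_init__(self) -> None:
        # Reject an invalid plan before any audio is generated.
        if self.key not in VALID_KEYS:
            raise ValueError(f"unknown key: {self.key!r}")
        beats, unit = self.time_signature
        if beats <= 0 or unit not in (1, 2, 4, 8, 16):
            raise ValueError(f"invalid time signature: {self.time_signature}")
        if not 20 <= self.tempo_bpm <= 300:
            raise ValueError(f"tempo out of range: {self.tempo_bpm}")
        if not self.instruments:
            raise ValueError("at least one instrument is required")
        if not self.sections or any(s.bars <= 0 for s in self.sections):
            raise ValueError("every section needs a positive bar count")

    def total_bars(self) -> int:
        return sum(s.bars for s in self.sections)


blueprint = MusicalBlueprint(
    key="A minor",
    time_signature=(4, 4),
    tempo_bpm=72,
    instruments=("piano", "bass", "drums"),
    sections=(Section("intro", 4), Section("verse", 8), Section("chorus", 8)),
)
print(blueprint.total_bars())  # 20
```

Making the dataclass frozen mirrors the “contract” framing: once the plan is validated, later stages read it but cannot quietly change it.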

Analyzing The Iterative Refinement Capability

In my testing of the platform, the most significant shift is the ability to intervene. Because the system treats the track as a collection of components (drums, bass, harmony) rather than a single flattened waveform, users can request specific alterations. A request to “change the drums to a half-time feel” is applied to the drums alone: it modifies only the rhythmic structure while preserving the melody and harmony. This moves the workflow closer to a traditional Digital Audio Workstation (DAW) experience, albeit one controlled by natural language.
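
A rough sketch of why component-level editing is possible: if the track is stored as separate components rather than one waveform, an edit can be routed to a single component and everything else carried over unchanged. The track layout, the 16-step drum grid, and the `half_time` and `apply_edit` functions below are hypothetical simplifications, not the platform's internal representation.

```python
from __future__ import annotations

import copy
from typing import Callable

# Hypothetical track model: each component is stored separately instead of
# as one mixed waveform. Drum hits are step indices on a 16-step grid
# (one bar of 16th notes in 4/4, so beats 1-4 fall on steps 0, 4, 8, 12).
track = {
    "drums": {"kick": [0, 8], "snare": [4, 12], "hihat": list(range(0, 16, 2))},
    "bass": ["A2", "A2", "F2", "G2"],
    "harmony": ["Am", "Am", "F", "G"],
}


def half_time(drums: dict[str, list[int]]) -> dict[str, list[int]]:
    """Rough half-time feel: kick on beat 1, a single snare on beat 3."""
    edited = copy.deepcopy(drums)  # never mutate the original pattern
    edited["kick"] = [0]
    edited["snare"] = [8]
    return edited


def apply_edit(track: dict, component: str, transform: Callable) -> dict:
    """Return a new track in which only `component` has been transformed."""
    if component not in track:
        raise KeyError(f"no such component: {component!r}")
    edited = dict(track)  # untouched components are carried over as-is
    edited[component] = transform(track[component])
    return edited


new_track = apply_edit(track, "drums", half_time)
print(new_track["drums"]["snare"])         # [8]
print(new_track["bass"] == track["bass"])  # True
```

This is the “Modify Specific Parameter” behavior in miniature: the bass and harmony are never regenerated, so they cannot drift as a side effect of the drum edit.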

Operational Logic Of The Agentic System

The functionality here is best understood not as a creative magic trick, but as a logic gate that filters abstract requests into concrete musical data.

Phase 1: Semantic Translation And Structural Planning

The process begins with the extraction of musical intent. If a user describes a “melancholic rainy day,” the agent translates this into “Minor Key, Slow Tempo, Lo-Fi Texture, Minimal Percussion.” It presents this translation back to the user for validation. This step prevents the waste of computational resources on generating tracks that fundamentally misunderstand the user’s goal.
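
The translate-then-confirm step can be sketched as follows. A real agent would presumably use a language model for the translation; the keyword table here (`LEXICON`, `DEFAULTS`) and the function names are hypothetical stand-ins that keep the example self-contained. What it illustrates is the ordering: generation only runs after the user approves the proposed parameters.

```python
from __future__ import annotations

from typing import Callable, Optional

# Hypothetical lexicon standing in for the agent's language understanding.
LEXICON = {
    "melancholic": {"mode": "minor", "tempo": "slow"},
    "sad": {"mode": "minor", "tempo": "slow"},
    "happy": {"mode": "major", "tempo": "medium"},
    "energetic": {"tempo": "fast", "percussion": "full"},
    "rainy": {"texture": "lo-fi", "percussion": "minimal"},
    "calm": {"tempo": "slow", "percussion": "minimal"},
}
DEFAULTS = {"mode": "major", "tempo": "medium", "texture": "clean", "percussion": "standard"}


def translate(description: str) -> dict[str, str]:
    """Map descriptive words to musical parameters; later words override earlier ones."""
    params = dict(DEFAULTS)
    for word in description.lower().replace(",", " ").split():
        params.update(LEXICON.get(word, {}))
    return params


def summarize(params: dict[str, str]) -> str:
    return ", ".join(f"{name}: {value}" for name, value in params.items())


def plan_then_generate(
    description: str,
    approve: Callable[[str], bool],
    generate: Callable[[dict[str, str]], object],
) -> Optional[object]:
    """Only call the (expensive) generator once the user approves the plan."""
    params = translate(description)
    if not approve(summarize(params)):
        return None  # rejected plan: no audio generation is attempted
    return generate(params)


print(summarize(translate("melancholic rainy day")))
# mode: minor, tempo: slow, texture: lo-fi, percussion: minimal
```

With this table, “melancholic rainy day” maps to a minor mode, slow tempo, lo-fi texture, and minimal percussion, mirroring the translation described above; a rejected plan returns before the generator is ever called.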

Phase 2: The Constrained Generation Protocol

Once the blueprint is ratified, the audio engine generates the sound. Unlike “one-shot” generators, this engine is constrained by the blueprint: it cannot arbitrarily switch keys or change tempo unless the blueprint explicitly calls for it. This constraint yields a higher hit rate for usable, professional-grade audio, because the randomness is confined within safe theoretical boundaries.
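
One simple way to picture randomness confined within theoretical boundaries is to let a generator propose notes freely and then snap each one to the nearest pitch in the blueprint's key. This is a minimal sketch, assuming MIDI note numbers and a plain major/natural-minor scale model; the function names are hypothetical, and snapping is a stand-in technique, not necessarily how the product's audio engine enforces its constraints.

```python
from __future__ import annotations

import random

NOTE_NAMES = ("C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B")
SCALE_STEPS = {
    "major": (0, 2, 4, 5, 7, 9, 11),
    "minor": (0, 2, 3, 5, 7, 8, 10),  # natural minor
}


def scale_pitch_classes(key: str) -> set[int]:
    """Pitch classes (0 = C ... 11 = B) allowed by a key such as "A minor"."""
    root_name, mode = key.split()
    root = NOTE_NAMES.index(root_name)
    return {(root + step) % 12 for step in SCALE_STEPS[mode]}


def snap_to_key(midi_note: int, allowed: set[int]) -> int:
    """Move an out-of-key MIDI note to the nearest in-key pitch (ties go down)."""
    for offset in range(7):  # any non-empty pitch-class set is within 6 semitones
        for candidate in (midi_note - offset, midi_note + offset):
            if candidate % 12 in allowed:
                return candidate
    raise ValueError("key has no pitch classes")


def constrain(notes: list[int], key: str) -> list[int]:
    allowed = scale_pitch_classes(key)
    return [snap_to_key(n, allowed) for n in notes]


def generate_melody(key: str, length: int, rng: random.Random) -> list[int]:
    # Stand-in generator: unconstrained random proposals, then constrained to the key.
    proposals = [rng.randint(57, 81) for _ in range(length)]
    return constrain(proposals, key)


print(constrain([69, 61, 66, 68], "A minor"))  # [69, 60, 65, 67]
print(generate_melody("A minor", 8, random.Random(0)))
```

In the first call, A (69) is already in key and stays, while C#, F#, and G# are each pulled down a semitone to C, F, and G. The random melody varies with the seed, but every note it returns belongs to A minor.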

Comparative Analysis Of Generative Architectures

To visualize why this architectural shift matters for professional use, we can contrast the “Black Box” model with the “Agentic” model.

| Technical Variable | Standard “Black Box” Models | Agentic Blueprint Models |
| --- | --- | --- |
| Data Structure | Flattened Waveform Prediction | Hierarchical Component Planning |
| User Control | Input Only (Prompt) | Input + Intermediate Validation |
| Error Handling | Regenerate Entire Track | Modify Specific Parameter |
| Long-Term Coherence | Low (Drifts over time) | High (Adheres to structure) |
| Theory Adherence | Probabilistic / Random | Rule-Based / Strict |

The Shift From Novelty To Utility

The value of this technology lies in its reliability. For a sound designer or a video editor, a tool that produces a “surprising” result is a liability. They require predictable, high-quality assets that fit a specific hole in their timeline. By prioritizing structure over randomness, the system sacrifices the “magic” of unexpected results for the utility of consistent, usable professional audio.